Looking backwards to go forward — words from talks in late 2020

The following is a rough amalgam of several different talks I gave between October and December 2020, all of which skirted around the challenges for digital staff and skills in museums.

These talks have tried to explore the idea that it’s very different now from one decade ago, and from two decades ago. The complexity of systems, technologies, and their baked-in politics is even greater and more opaque; and the in-house skills, deep craft, and specialised staff resources have dwindled at the very time they are most needed. This is not just a ‘museum problem’ but one common across all the culture industries, the public sector, and, dare I say, the business world too.

It isn’t easily ‘fixed’.

What follows is a long-ish read, so I’d suggest popping on Kraftwerk’s classic 1981 album Computer World as a sonic accompaniment.

In most of my recent talks I had initially intended to speak about some quite specific new things (the new work at ACMI, the challenges of AI and machine learning in museums), but I ended up reading a book of interviews with old videogame designers, Retro Tea Breaks by Neil Thomas. It was a project I had backed on Kickstarter without much of an idea of how it would turn out.

Reading its longform exchanges with game designers and programmers from the 1980s and 1990s, I was struck by how much arcane technical knowledge was needed to make the home computing hardware of the time do what was desired. Getting those early home computers to do what a game designer wanted required a lot of ‘in the weeds’ hacks and workarounds: CPU speeds were very limited, onboard memory was tiny, magnetic storage media like cassettes and floppy disks were slow and small, and sound and graphics capabilities were very limited. For those working as narrative designers, graphic artists, or sound composers, these technical limitations served as focal points for their creative practice. Add to that the very real temporal and geographic limits on knowledge sharing, and the specific contexts of production (local hubs of hobbyists and enthusiasts, local markets, local relationships with the domestic trade press and distributors) become incredibly important in understanding how these software creations came into being. Reading the interviews, it is also clear how easily this era of home computing can be reduced to the stories of a few heroic individuals struggling against the odds, rather than looking at the contexts and communities these individuals were a part of. Only by reading many different perspectives on the same period does an appreciation of the importance of both formal and informal networks emerge. (I am also looking forward to Melanie Swalwell’s upcoming book on Australian game designers from the same period, which is out in 2021.)

Reading these oral histories reminded me of how little the museum sector tells, or records, its own stories, and of how the pandemic has raised the stakes. Museum technology used to be optional. For a medium or large sized museum it no longer is. It is as essential as plumbing.

This is a heavily truncated journey from 2000 to the present: a potted and biased history of museums, their interactions with the web, and the effects of this on museums. And what this might mean for the small moment of fracture and opportunity that is emerging out of the destruction of COVID-19.

Sometimes I look back, a little saddened by how little progress seems to have been made. In fact I might say we’ve gone backwards. I’ve had the luxury of working with different people from across Australia, New Zealand, the US, Canada, the UK, Europe, Taiwan and Singapore, and this has exposed me to a lot of different ways of thinking about and approaching the same issues and challenges.

The idea of a ‘multiplatform museum’ is not a new concept — even though the technologies which enable specific ‘multiple platforms’ continue to change.

The original example of ‘multiplatform’ museum media was probably the exhibition catalogue, driven by changes in publishing technologies. Of course, the museum label itself dates back centuries. And as we learned from Loic Tallon back in 2014, the first museum audioguide can be traced to Amsterdam in the late 1940s. Museums were also collaborating with radio stations long before the MACBA in Barcelona launched its excellent net radio initiative, Radio Web MACBA, in 2006.

In the late 2000s Susan Chun and others from the North American museum technology community were trying to compile a living archive of museum technology projects. It was modeled loosely on the ExhibitFiles platform, which emerged from the science center/science museum world and was funded initially by the National Science Foundation. Neither project is sustained today, and ExhibitFiles provides only a small snapshot of a decade. So these days it is only through conference archives and awards that a potted history of the last twenty years of the field can be revealed: Museums and the Web remains a vast treasure trove of ‘productive failures’, whilst AAM’s Media & Technology awards cover some of the more sparkly projects. Being conference-oriented, these inevitably bias toward the institutions that have had ‘conference budgets’, namely well-funded North American and Western European institutions. But something is often better than nothing.

Computers have slowly crept into museums over the last 60 years and there are at least three different and intersecting ways in which computing came into the core of what museums do.

Firstly, in the 1960s, parallel to the computerization of library catalogues, computers began to be used to augment collection cataloguing. This era was driven by the technical skills and interests of museum registrars, librarians and archivists. A much-cited conference report from the Computers and Their Potential Applications in Museums event at the Met in 1968, supported by IBM, is remarkable reading. Much like Doug Engelbart’s ‘Mother of all demos’, also in 1968, this event was prescient in unexpected ways. Many of the same opportunities and challenges remain almost exactly as described back then.

From Met Director Thomas Hoving’s 1968 foreword,

“There are going to be problems and growing pains. One of them already is money, the high and probably spiraling costs of maintenance and upkeep. Another is technological change and the specter of obsolescence. Will all our systems be compatible, and will those developed in 1970 be compatible with what the year 2000 will bring? There are infinite variables to juggle, and that most elusive of qualities, the constant K to search for. Can we train the people to do the job, and will there be anyone qualified to judge the results? And will we have the restraint and intelligence not to go off on a mad, senseless orgy of indiscriminate, nit-picking programming?”

The prevailing ethos during this era was about the computerization of a field that arrived in lockstep with the beginnings of the professionalization of museums. Computer systems were expensive, networks were predominantly local and databases were simple. As such, computerization was only available to a small group of university museums and the biggest institutions.

The legacy of that work can still be felt today in museums’ default position to ‘put their catalogues and collections online’.

Secondly, during the 1980s and especially the 1990s, interactive experiences bloomed, particularly in science museums. In other types of museum, computers were still mostly back of house, though they had begun to extend into financial management.

Thirdly, and in parallel to all these phases, were artists working with computers, network technologies, what later was called ‘multimedia’, and with the arrival of the web, ‘net art’. Curators and public programming staff came into contact with computer technologies through all three of these — collections, gallery interactive experiences, and artists.

The web changed this significantly. But it took a little while.

When I started work in museums it was still the pre-social web era, the peak era of ‘websites’. Search was in its infancy and the business models of the web had not been codified. The technologies and tools of the un-networked CD-ROM era were still dominant, and websites could sometimes still be made without even considering a database-driven content management system.

At the Powerhouse Museum during this time we were making projects in raw HTML, ColdFusion and Flash, and in-gallery interactives were made with Director, a spillover from the CD-ROM authoring era. Towards the end of this period, ColdFusion was switched out for PHP.

Success was measured in very basic web metrics. These were the early days of analyzing server logs with WebTrends, and museums still reported ‘hits’; we hadn’t even moved on to reporting sessions or visits! As the ad-tech industry fired up after the Dot Com Crash, metrics would become more sophisticated and complicated with the shift to analytics-as-a-service and early Google Analytics. Even early on, though, it was very valuable for anyone looking at the endless permutations of charts, graphs and tables these analytics tools made possible to have a technical understanding of how websites, and their composite webpages and web elements, were served by a server. Without that technical understanding it was easy to be seduced by chart junk.
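To make the ‘hits’ problem concrete: a browser loading one page requests the HTML and then every image and stylesheet it references, and the server logs each of those requests as a hit. The minimal Python sketch below, a hypothetical illustration rather than how WebTrends or Google Analytics actually computed anything, tallies the same made-up traffic three ways. The sample log lines (in roughly the Common Log Format), the function name `summarise`, the rule that a ‘page’ is any path ending in `.html` or `/`, keying visitors by IP address, and the 30-minute inactivity timeout that ends a visit are all assumptions chosen for illustration.

```python
import re
from datetime import datetime, timedelta

# Hypothetical sample traffic, roughly in Common Log Format; lines are time-ordered.
LOG = """\
203.0.113.5 - - [12/Mar/2001:10:00:00 +1100] "GET /collection/index.html HTTP/1.0" 200 5120
203.0.113.5 - - [12/Mar/2001:10:00:01 +1100] "GET /images/logo.gif HTTP/1.0" 200 2048
203.0.113.5 - - [12/Mar/2001:10:00:01 +1100] "GET /images/nav.gif HTTP/1.0" 200 1024
203.0.113.5 - - [12/Mar/2001:10:00:02 +1100] "GET /styles/site.css HTTP/1.0" 200 900
203.0.113.5 - - [12/Mar/2001:10:05:00 +1100] "GET /collection/object.html HTTP/1.0" 200 4096
203.0.113.5 - - [12/Mar/2001:10:05:01 +1100] "GET /images/object-thumb.jpg HTTP/1.0" 200 8192
198.51.100.7 - - [12/Mar/2001:10:10:00 +1100] "GET /index.html HTTP/1.0" 200 5120
198.51.100.7 - - [12/Mar/2001:10:10:01 +1100] "GET /images/logo.gif HTTP/1.0" 200 2048
203.0.113.5 - - [12/Mar/2001:14:00:00 +1100] "GET /index.html HTTP/1.0" 200 5120
203.0.113.5 - - [12/Mar/2001:14:00:01 +1100] "GET /images/logo.gif HTTP/1.0" 200 2048
"""

# Captures client IP, timestamp and requested path from each log line.
LINE_RE = re.compile(r'^(\S+) \S+ \S+ \[([^\]]+)\] "\S+ (\S+) [^"]*" \d{3} \S+$')
SESSION_GAP = timedelta(minutes=30)  # assumed inactivity timeout that ends a visit


def is_page(path):
    # Assumption: only HTML documents and directory indexes count as pages.
    return path.endswith(".html") or path.endswith("/")


def summarise(log_text):
    hits = page_views = visits = 0
    last_seen = {}  # visitor key (IP address) -> time of that visitor's last request
    for line in log_text.splitlines():
        match = LINE_RE.match(line)
        if not match:
            continue  # skip blank or malformed lines
        ip, stamp, path = match.groups()
        when = datetime.strptime(stamp, "%d/%b/%Y:%H:%M:%S %z")
        hits += 1  # every request is a hit: pages, images, stylesheets alike
        if is_page(path):
            page_views += 1
        previous = last_seen.get(ip)
        if previous is None or when - previous > SESSION_GAP:
            visits += 1  # first request, or back after a long gap: a new visit
        last_seen[ip] = when
    return {"hits": hits, "page_views": page_views, "visits": visits}


if __name__ == "__main__":
    print(summarise(LOG))  # → {'hits': 10, 'page_views': 4, 'visits': 3}
```

Ten hits, four page views and three visits all describe the same two people, which is why a ‘hits’ chart without this context says very little about an audience.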

This pre-social era took what had already been digitised or recorded into a database and pushed it out to the web: from the local network to the internet. There was never a sense that online audiences would be equivalent to, or as wide-reaching as, actual visitors. And in my experience there was genuine surprise when this exposure generated real community interest, from both amateurs and scholars.

During this period the Powerhouse was hosting AMOL (Australian Museums OnLine), a national portal for cultural heritage practitioners and a means to search across some collections. This was before Google indexed museum catalogues (albeit poorly), so AMOL and its counterparts around the world, like Canada’s CHIN and the Virtual Museum of Canada, were the beginnings of public-facing cross-organisational collection search. My former boss Kevin Sumption’s paper from Museums and the Web 2000 describes them as,

“The third emerging meta-center typology I have identified is that of the Professional meta-center. Here the term ‘professional’ describes the primary audience for whom these sites were designed. In the case of AMOL and CHIN, those working directly in paid or unpaid heritage positions. This is not to suggest that professional meta-centers are not public-friendly, however there is little doubt that the information architecture, search format and content is intended primarily for users from the heritage sector.”

Next came the social era, which began as the museum collections that had been put online started to gain traction. Museums experimented with Flickr (George Oates then launched Flickr Commons) and with Wikipedia (Liam Wyatt kicked off GLAM Wiki and the idea of ‘Wikipedians in residence’). Both were seen in the field as ‘radical practice’ back then. Museums experimenting on, and with, social media platforms drew on technical staff as well as museum educators, and it would be a few more years before social media in museums became largely confined to the narrower definition of ‘social media marketing’.

As more advanced technologies became accessible as web services, museums began to experiment with early attempts at recommendation systems, computer vision, and semantic analysis. Quite a number of the technologies that museums are excited about in 2020 (computer vision, text mining, semantic analysis) actually go back two or more decades.

This early period of web services began to marginalize conventional enterprise IT teams inside the larger museums, cutting them off from the more nimble web/digital teams, as well as from the emerging digital marketing initiatives. IT was lumbered with complex, deeply entrenched legacy systems that made even minor changes take an unforgivable amount of time. Whilst the web teams were able to quickly build tools that transformed data through third-party online services, IT teams were often stuck for months upgrading the finance system.

Museums began to launch their own APIs, buoyed by the success that they had had using commercial APIs — again, Flickr’s influence was strong. Those museum APIs became soft IT infrastructure.

There was a strong awareness of the tectonic shifts this would bring in terms of skills. Tim Hart and Martin Hallett in 2011 wrote a robust history of the Australian museum technology community and its then ground-breaking work, cautioning,

“The extensive use of technology placed new demands on museum management. Information technology departments were established in Australia’s larger museums in the early 1980s. Along with new requirements for public accountability and regulatory compliance, the backup of digitised information has become a significant responsibility and risk-management issue. These developments have profoundly reshaped the skills that museums need to recruit and cultivate in their staff.”

This period took the gains of the previous era (the potential for wider audiences) and ran with them. When early social media marketing in museums began, for a lot of institutions this was their first real taste of transactionally ruthless ‘commercial marketing’ rather than the vaguer ‘arts marketing’.

There was even more optimism that opening up museums to the much wider community of the internet would be an unreservedly good thing. That optimism turned out to be misplaced, especially as more and more of the world got online, bringing more of its seemingly intractable social problems with it.

The social era was surprisingly short-lived. It was quickly subsumed into a much larger shift to mobile, mobile being, by its very nature, social. This shift was so rapid that there was a sizeable trend away from building internal capacity and toward outsourcing, to cope with a lack of app coding and design skills. Museums couldn’t keep up. Coming off the tail end of the Global Financial Crisis, exhibition attendances were (oddly) booming, and technology was just one of several challenges museums faced.

For a few years, perhaps from 2009 to 2012, websites were largely forgotten as app fever took hold. The switch in focus to outside the institution, and to these shiny new input devices, happened far too quickly for museums to adapt.

As a result, this is when ‘projects’ exploded, and museums went mad for mobile apps. They weren’t alone.

Turbocharged by the rapid proliferation of mobile smartphone internet connections, each tied to a single human user (rather than the shared connection of a home computer), the reach of the internet grew enormously. However, museum projects never managed to reach new audiences at the same rate as new users around the world went online. Seeing the potential, perhaps even an opportunity, museums began to create Chief Digital Officer roles and hire for them.

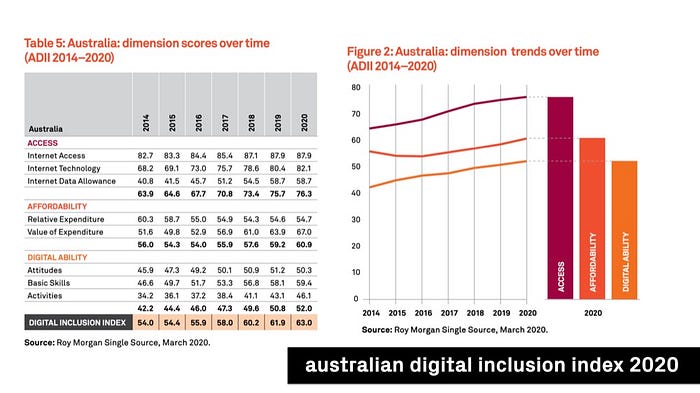

As is clear from innumerable reports into internet access and digital literacies over the last decade, internet access is far from evenly distributed. The Australian Digital Inclusion Index charts the rise of internet access along with affordability and what it terms ‘digital capability’. The shift to mobile brought many people their first access to the internet, but on much more expensive data plans, although by 2020 both speeds and prices had improved. Digital capability, however, has barely increased, so whilst there are more users, there are also more users with low digital capability. Not unexpectedly, those on low incomes and Indigenous, remote, and elderly Australians report the lowest levels of access, affordability, and capability.

During this decade ‘user experience’ entered more common museum parlance. But this focus on user experience, especially in the mobile and post-mobile era, has been complicated by its focus on individuals: a single user’s journey on a device, rather than a collective endeavour. UX is now too often tied to individual rather than group outcomes. Outside of a wider, more engaged, design-literate organizational culture, this has led some teams and projects down narrow and ultimately unsuccessful paths. Optimizing for ‘transactional efficiency’ might be great for a company chasing its quarterly earnings target, but can be terribly misdirected in the longer, purpose-driven arc of a museum interaction. It wasn’t so much that techniques and strategies were transplanted from the playbooks of enormous for-profit corporations into museums, but that museums lacked the necessary understanding of why and how they differed from those corporations.

With the rapid growth of user experience, the necessary outsourcing resulting from the rapid growth of mobile, the explosion of social media as marketing, and the emergence of ‘content marketing’, there was an influx of non-technical people into technology-related roles in the cultural sector.

Under normal circumstances this would have worked out well, bringing more diverse skills to complement existing technical expertise. For large museums, social media managers and content marketers became a highly valued part of the marketing mix, helping them connect to wider audiences. Highly skilled at digital comms (and at defusing difficult customer complaints!), these roles have continued to be important and valuable. The people in these roles are skilled users of social platforms, and it is unrealistic to also expect them to be experts at designing social platforms or developing software, or even to have a critical understanding of the rapidly changing political economies of the platforms they use. Nevertheless, this is what was expected of them.

As the 2010s rolled on museum budgets began to be cut. Philanthropic funding in the US began to shift to respond to other social crises, and austerity policies in the UK saw a huge divestment from cultural institutions — leaving the projects that had bloomed in the early 2010s to wither. Technically skilled and experienced staff departed and many institutions had only the non-technical content creators in digital and technology teams, sometimes even leading them. Instead of more diverse skills complementing deep specialist technical knowledge, they simply replaced them — often at lower salaries and in less secure roles.

Those with any technical knowledge or experience know that infrastructure needs continual maintenance. Maintenance is unforgiving but is a necessary byproduct of any organisational innovation. Knowing exactly how much maintenance a new system or process will require takes skilled staff with a deep understanding of what has been made and why, and of its lifecycle. If those staff have been let go, outsourced or replaced, then the amount of ongoing maintenance a system needs can be vastly misrepresented and misunderstood. Maintenance needs to be operationalised, and systems continually worked on and adapted.

The nonprofit and public sectors always struggle with the artificial division between capital expenditure and operational expenditure. Capital is easier to raise from funders, whilst increased operational funding is virtually impossible to secure. It can quickly become attractive, in the short term, to seek outsourcing as a way out. But once outsourcing begins, it starts an unstoppable process of skill erosion.

Sometimes new skills are needed quickly — as we saw during the mobile period — but in making a strategic shift to outsourcing for short term needs, there should be a concurrent upskilling of internal staff to effectively operationalize the new skills after the first outsourcing period is up. Ideally the outsourcing is used only to ‘bridge the gap’ — to open up time for training and understanding — not become the entirety of operations.

With such a high turnover of staff, coupled with a flight of technical specialists from the sector, new people took up senior roles. For leaders lacking experience of complex technical projects, faced with improving new infrastructure that they weren’t ‘responsible for’, and without knowledge of, or curiosity about, the specific contexts that the projects emerged from, the attraction of a tabula rasa approach is easy to see. Unfortunately, as new, sometimes non-museum, leaders came into the field, they actively ignored what had come before.

Now, in 2020, we are quite deep into a post-mobile, post-social era. We all have a much more complicated relationship with technology and commercial providers. Most of us are aware of the privacy trade-offs we are forced to make, not only in our own private use of technology, but also in the choices we make for our institutions. Everything is political, and with the massive exodus of skilled, technical talent from the sector (along with many non-technical specialists), there are too few people with the depth and in-sector experience to help museums make sensible strategic technology choices without being beholden to third parties. Unlike in previous periods of rupture, a decade of austerity politics in the UK and the turmoil in the US have left the sector facing multiple crises on all fronts. Technology is probably quite far down the list of ‘most pressing museum crises’. Rightly so.

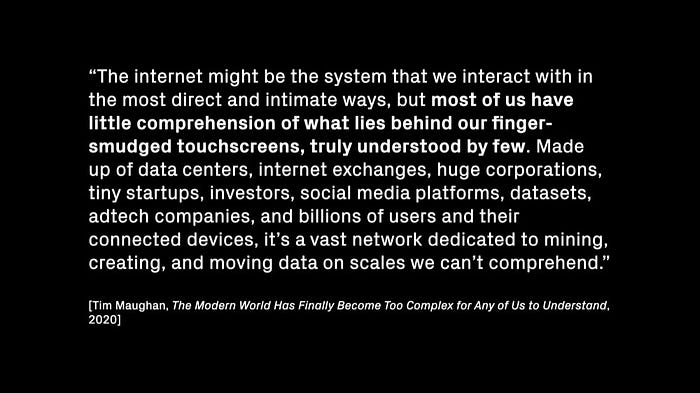

I really like Tim Maughan’s description of the situation: we are in a world of intricate but opaque technologies.

And then came COVID.

The consumerization of IT and the shift to ‘as-a-service’ systems and applications over the previous half decade meant that, even for those institutions that had depleted their IT infrastructure and staff, the transition to working from home wasn’t too technically difficult. The ease with which staff continued to work remotely using Office365 masked the lack of technical knowledge. But this was a short-term silver lining: the absence of the very ICT and technically literate staff who had been outsourced or let go in past years made any more significant ‘pivot to digital’, in terms of public engagement and new projects, all the more difficult.

It’s very challenging to pivot to a new domain of operation without in-house expertise. It’s even more challenging if museum leadership believes ‘technology is simple’ because there has been little or no interruption to their emails and nothing has ‘broken’. It’s a mirage of competence.

Add in the collapse of self-generated revenue (aka ‘ticket sales’), a collapse in internal confidence around which direction was the right way to go, as well as many of the smaller exhibition and cultural sector technology companies folding because of cash flow issues (and no certainty of near term new work), and it was the perfect storm.

And that’s even before the much larger effects of COVID in our communities and in the private lives of museum staff.

It is not going away.

Matt Locke recently articulated the added shift that COVID has brought, whereby an organization’s digital initiatives are brought under not a ‘digital strategy’ but a ‘remote strategy’. I like this formulation. It feels like the year 2000 again.

“I want to strongly suggest that this is different from having a ‘digital’ strategy — digital technologies might well be a part of how you deliver your remote strategy, but we need to think more about the social and cultural contexts of the home. For many building-based organisations — whether this is a central office, a shopping mall, a theatre, museum or cinema — this means considering a future in which the building is no longer the sole focus of your strategy. Instead of seeing remote services as a side hustle to the main purpose — getting people through the door — the future might be an even balance between remote and building based provision”

Looking back at the early discussions about the web, or even earlier at the arrival of computing in museums, it was always about a ‘remote strategy’. We didn’t call it that; maybe the closest we got was ‘digital outreach’. What we lacked back then was a design language to describe and respond to the ‘social and cultural contexts’ of remote users. We have that now. And we have the technologies.

What concerns me at present is whether we have enough technically skilled staff in the sector to sensibly develop, and more importantly, deliver on this opportunity.

Original draft version was part of Episode 57 of Fresh and New, a private thinking-in-progress newsletter that covers art, music, technology, and whatever I am interested in in any given fortnight.